This section shows you how to set up the archive break records feature for Pullup jobs.

Prerequisites

The following table shows the available external storage options and the requirements for each.

| Storage option | Prerequisites |

|---|---|

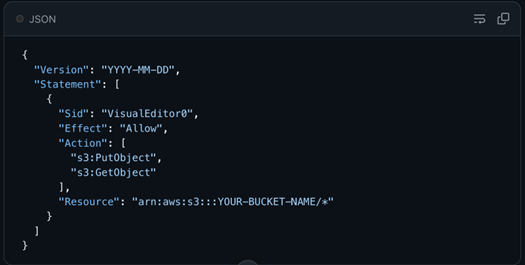

| Amazon S3 |

|

| ADLS |

|

| Azure Blob |

|

| Google Cloud Storage (GCS) |

|

Steps

- From Explorer, connect to a Pullup data source.

- Optionally, assign a Link ID to a column in the Select Columns step.

- In the lower left corner, click Settings.

The Settings dialog box appears.

- Under the Data Quality Job section, select the Archive Breaking Records checkbox, then click the drop-down list. A list of available external storage options appears.

- Select the external storage option to which break records will be sent.

- Click Save.

- Set up and run your DQ Job.

- When a record breaks, its metadata is exported automatically to your external storage service.

Important: If you specify a link ID, the column you assign as the link ID should not contain NULL values, and its values should be unique. The primary key is the most common choice. Composite primary keys are also supported.
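Before assigning a link ID, it can help to confirm that the candidate column (or combination of columns) meets the two requirements above. The following is a minimal sketch in plain Python, not part of the product: the function name `check_link_id`, the sample rows, and the column names are hypothetical, and `None` stands in for a SQL NULL. It assumes you have already loaded a sample of the source rows into memory.

```python
from typing import Any, Iterable, Mapping, Sequence


def check_link_id(rows: Iterable[Mapping[str, Any]], key_columns: Sequence[str]) -> dict:
    """Count NULL and duplicate key values for a candidate link ID.

    key_columns holds one column name, or several for a composite key.
    """
    seen = set()
    null_rows = 0
    duplicate_rows = 0
    for row in rows:
        # Build the key as a tuple so single and composite keys share one code path.
        key = tuple(row.get(col) for col in key_columns)
        if any(value is None for value in key):
            null_rows += 1
            continue
        if key in seen:
            duplicate_rows += 1
        else:
            seen.add(key)
    return {
        "null_rows": null_rows,
        "duplicate_rows": duplicate_rows,
        "is_valid": null_rows == 0 and duplicate_rows == 0,
    }


# Hypothetical sample rows; None would represent a SQL NULL.
sample = [
    {"order_id": 1, "line_no": 1, "sku": "A"},
    {"order_id": 1, "line_no": 2, "sku": "B"},
    {"order_id": 2, "line_no": 1, "sku": "A"},
]

single = check_link_id(sample, ["order_id"])
composite = check_link_id(sample, ["order_id", "line_no"])
print(single["is_valid"], composite["is_valid"])
```

In this sample, `order_id` alone repeats and so fails the uniqueness requirement, while the composite of `order_id` and `line_no` passes. On a large table, an equivalent check is usually better run as a query against the source database than in memory.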